What Happened

On Thursday, OpenAI announced it had developed a large language model specifically trained on common biology workflows.

Why It Matters

Called GPT-Rosalind after Rosalind Franklin, the model appears to differ from most science-focused models from major tech companies, which have generally taken a more generic approach that works for various fields.

Key Details

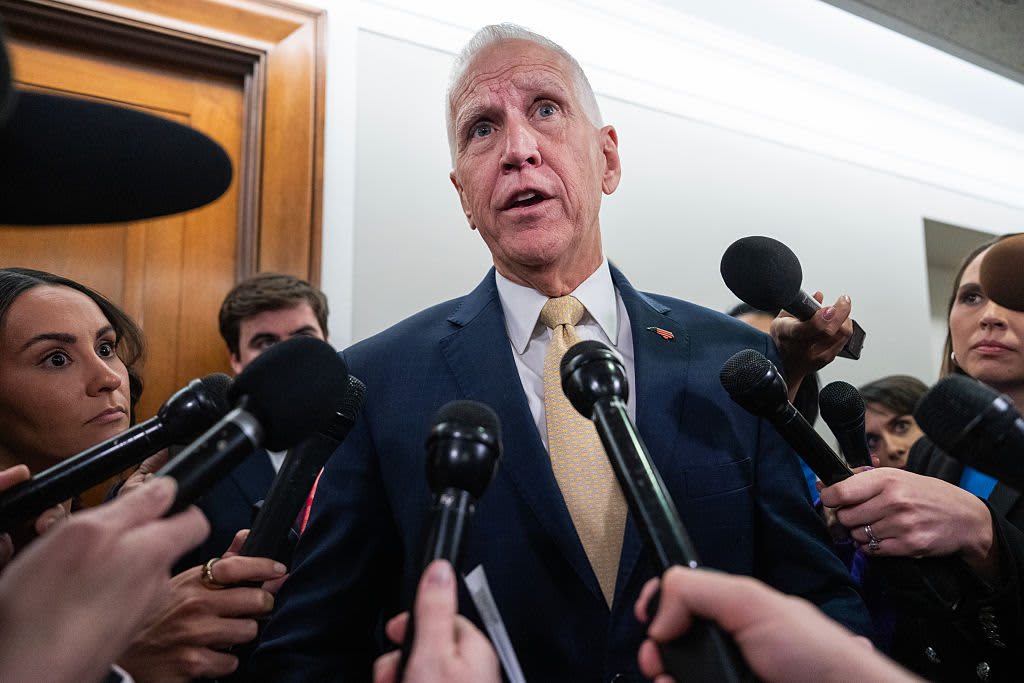

- In a press briefing, Yunyun Wang, OpenAI's Life Sciences Product Lead, said the system was designed to tackle two major roadblocks faced by current biology researchers.

- One is the massive datasets created by decades of genome sequencing and protein biochemistry, which can be too much for any one researcher to take in.

- The second is that biology has many highly specialized subfields, each with its own techniques and jargon.

- For example, a geneticist who finds themselves working on a gene that's active in brain cells might struggle to make sense of the immense neurobiological literature.

What To Watch Next

Track official statements, independent verification, and regional impact updates in the next 24 to 48 hours.

Source: Ars Technica – All content – Original Link