What Happened

Even if you don't know much about the inner workings of generative AI models, you probably know they need a lot of memory.

Why It Matters

That appetite for memory is why it is currently almost impossible to buy a measly stick of RAM without getting fleeced.

Key Details

- Google Research recently revealed TurboQuant, a compression algorithm that reduces the memory footprint of large language models (LLMs) while also boosting speed and maintaining accuracy.

- TurboQuant is aimed at reducing the size of the key-value cache, which Google likens to a "digital cheat sheet" that stores important information so it doesn't have to be recomputed.

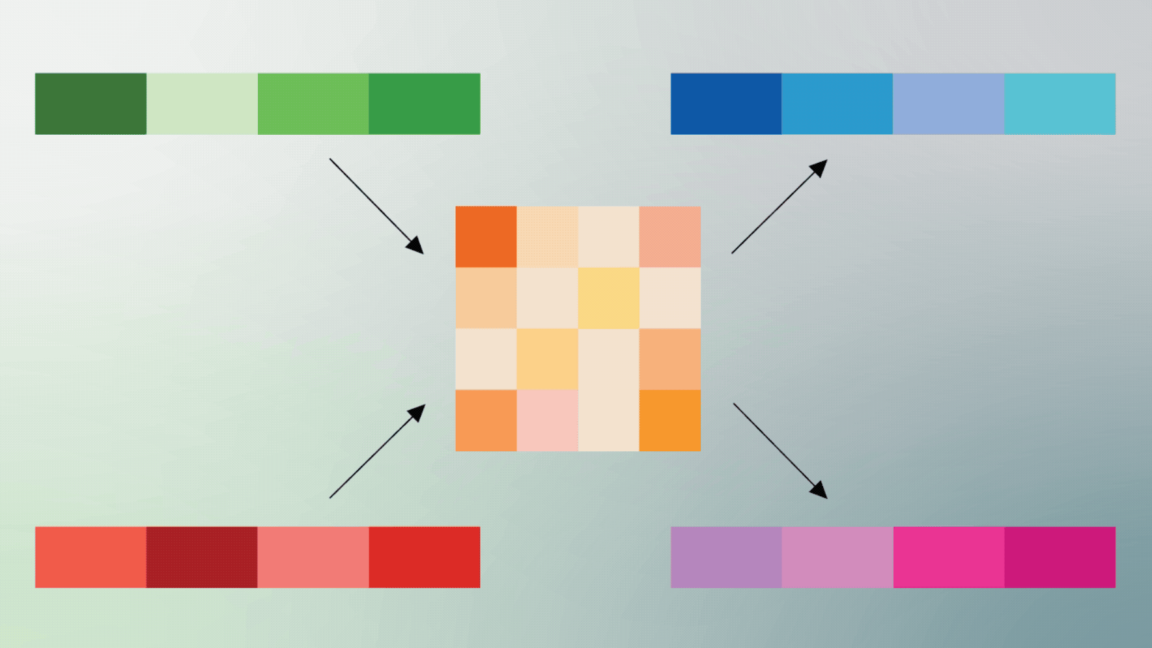

- This cheat sheet is necessary because, as we say all the time, LLMs don't actually know anything; they can do a good impression of knowing things through the use of vectors, which map the semantic meaning of tokenized text.

- When two vectors are similar, the pieces of text they represent are conceptually similar.
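
To make the vector idea above concrete, here is a minimal Python sketch. It is not TurboQuant: the source does not describe the algorithm's internals, so the `quantize` function below is a generic uniform scalar quantizer swapped in purely for illustration. The toy embeddings (`cat`, `kitten`, `car`) and all function names are hypothetical. The sketch shows two things: cosine similarity as one common way to measure how alike two vectors are, and how a vector stored with only a few bits per value can still point in nearly the same direction as the original.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def quantize(vec, bits=4):
    """Generic uniform scalar quantization (illustrative only, NOT TurboQuant).

    Maps each value to an integer code in [0, 2**bits - 1] and returns the
    codes plus the offset and step size needed to reconstruct the values.
    """
    levels = 2 ** bits - 1
    lo, hi = min(vec), max(vec)
    scale = (hi - lo) / levels or 1.0  # fall back to 1.0 for constant vectors
    codes = [round((x - lo) / scale) for x in vec]
    return codes, lo, scale


def dequantize(codes, lo, scale):
    """Reconstruct approximate values from integer codes."""
    return [lo + c * scale for c in codes]


# Hypothetical toy embeddings; real models use hundreds or thousands of dimensions.
cat = [0.90, 0.80, 0.10, 0.05]
kitten = [0.85, 0.75, 0.20, 0.10]
car = [0.10, 0.05, 0.90, 0.80]

if __name__ == "__main__":
    print("cat vs kitten:", cosine_similarity(cat, kitten))
    print("cat vs car:   ", cosine_similarity(cat, car))

    codes, lo, scale = quantize(cat, bits=4)
    restored = dequantize(codes, lo, scale)
    print("4-bit codes:  ", codes)
    print("cat vs restored cat:", cosine_similarity(cat, restored))
```

In this toy setup the related pair scores much higher than the unrelated pair, and the 4-bit copy stays very close to the original. Whether a real compression scheme holds accuracy at a given bit width depends on the method and the model, which is the claim Google is making for TurboQuant.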



Source: Ars Technica – All content – Original Link
