What Happened

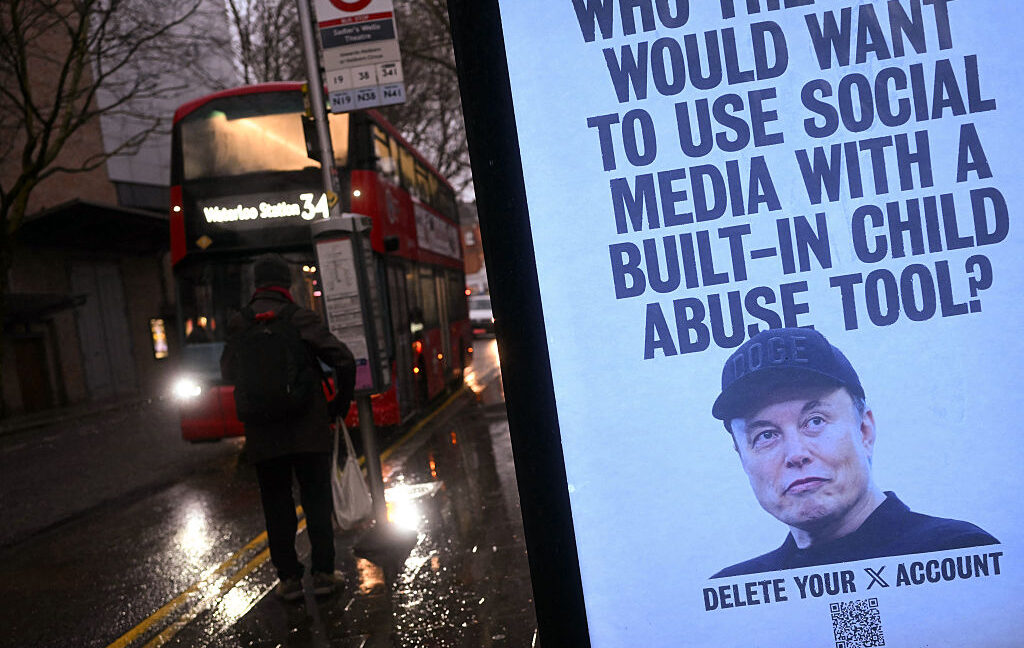

A tip from an anonymous Discord user led cops to find what may be the first confirmed Grok-generated child sexual abuse materials (CSAM) that Elon Musk's xAI can't easily dismiss as nonexistent.

Why It Matters

As recently as January, Musk denied that Grok had generated any CSAM during a scandal in which xAI refused to update filters to block the chatbot from nudifying images of real people.

Key Details

- At the height of the controversy, researchers from the Center for Countering Digital Hate estimated that Grok generated approximately three million sexualized images, of which about 23,000 appeared to depict children.

- Rather than fix Grok, xAI limited access to the system to paying subscribers.

- That kept the most shocking outputs from circulating on X, but the worst of it was not posted there, Wired reported.

What To Watch Next

Track official statements, independent verification, and regional impact updates in the next 24 to 48 hours.

Source: Ars Technica – All content – Original Link